- April 9, 2026

- Maneesh Gupta

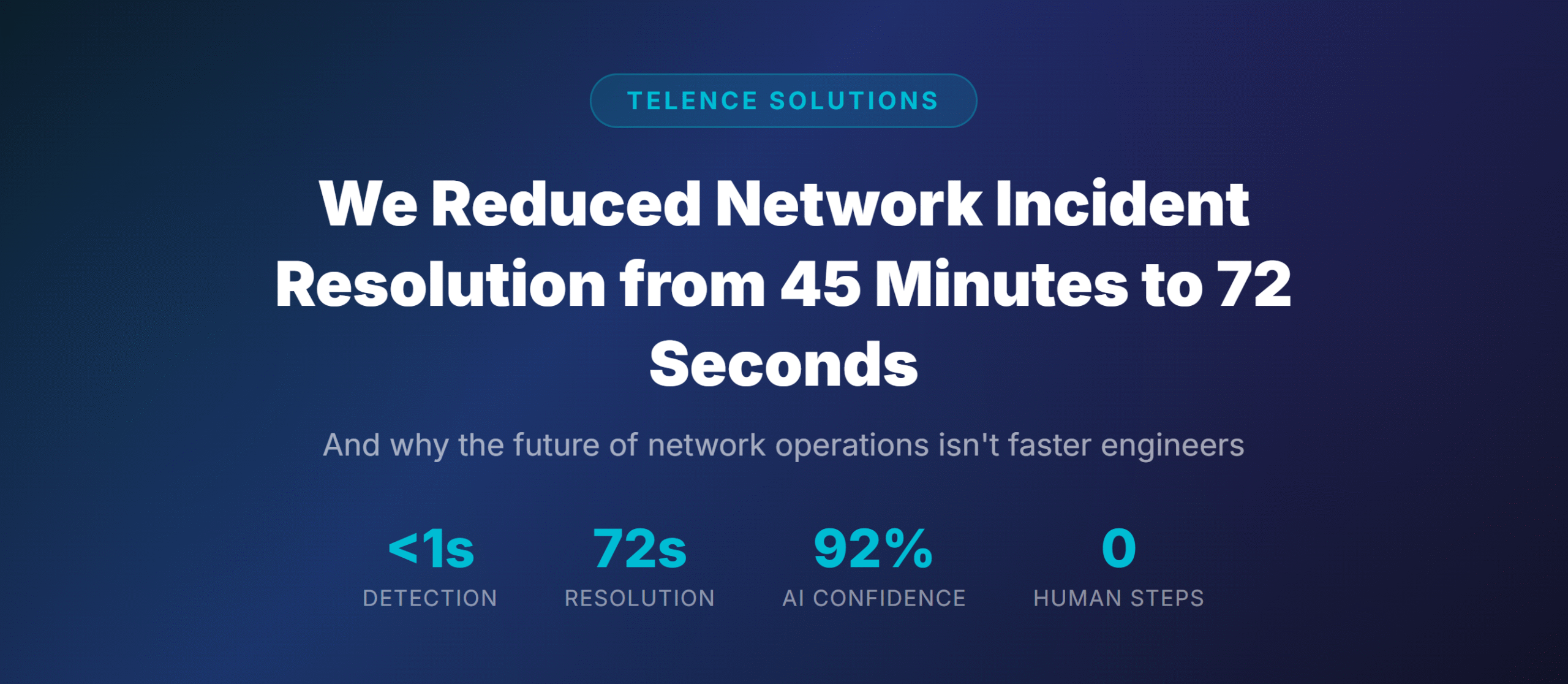

And why the future of network operations isn't faster engineers — it's no engineers at all.

It’s 2:47 AM. Your phone buzzes. A critical interface just went down on a spine switch in your data center. You squint at the screen, open your laptop, and begin the ritual that every network engineer knows too well.

Log into the monitoring dashboard. Check the alert. SSH into the device. Run show commands. Cross-reference with the CMDB. Check if anyone else is affected. Pull up the metrics. Read the logs. Form a theory. Test it. Fix it. Verify it. Document it. Close the ticket.

Forty-five minutes later, you’re done. The network is back. You’re exhausted. And you know this exact scenario will repeat itself — probably before the week is out.

This is network operations in 2026 for most organizations. And it’s fundamentally broken.

The Four Problems Nobody Talks About

We spend a lot of time in the networking industry talking about tools. New dashboards. Better alerts. Fancier automation. But we rarely talk about the structural problems that make network operations so painful.

- The first is alert fatigue. The average NOC processes thousands of alerts per day. Studies consistently show that 85% of these are noise — duplicate alerts, flapping interfaces, transient events that resolve themselves. But buried in that noise are the 15% that actually matter.

- The second is manual context gathering. When an incident does get flagged, the real work begins — and it’s not fixing the problem. It’s understanding it. Your engineer opens five, six, sometimes seven different tools. This process takes 30 to 40 minutes on average. The actual fix? Usually 5 minutes.

- The third is reactive posture. Traditional monitoring tells you what already failed. It doesn’t tell you what’s about to fail.

- The fourth is that we’re losing our best people. Senior network engineers didn’t sign up to be alert triagers. They burn out. They leave. And their institutional knowledge walks out the door with them.

What If the Network Could Heal Itself?

At Telence Solutions, we asked a different question. Instead of making engineers faster at incident response, what if we removed the need for human intervention entirely?

Not for every incident; that would be reckless. But what about the 80% of incidents that follow predictable patterns and have known root causes and well-established remediation procedures? What if an AI could handle those autonomously, with the same quality as your best engineer, in a fraction of the time?

Enter MCP: The Protocol That Changes Everything

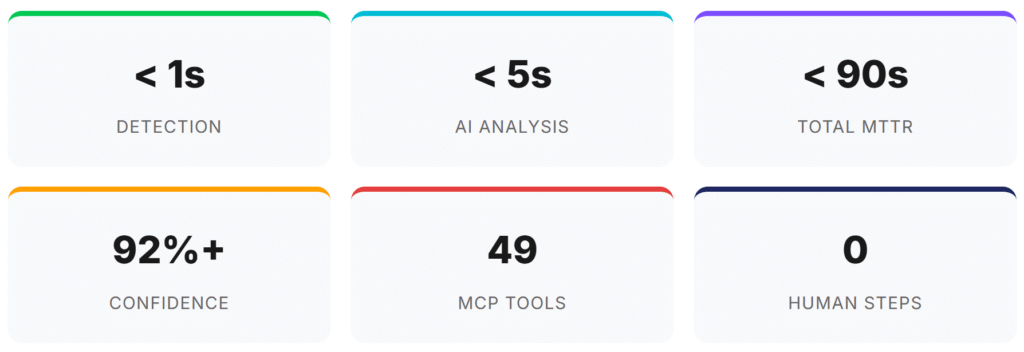

The breakthrough isn’t just AI — it’s how the AI connects to your network. We use the Model Context Protocol (MCP) to give Claude AI direct, bidirectional access to every component in the network operations stack.

Think of MCP as giving the AI hands, eyes, and ears. Through nine MCP servers, Claude can actively query your network devices (Cisco, Nokia, Juniper, Arista), pull real-time metrics from Prometheus, search logs in Loki, check device inventory in NetBox, execute remediation through Ansible, and annotate events in Grafana.

That’s 49 tools that Claude can call at any time, in any combination. This isn’t pre-scripted automation. Claude reasons about each incident fresh — in seconds instead of minutes.

72 Seconds: Anatomy of a Self-Healing Incident

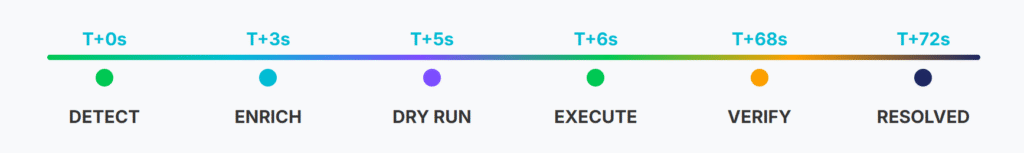

An interface goes down on a Cisco IOS-XR spine switch. Here’s what happens:

The 72-Second Breakdown

- Seconds 0-3: Claude makes 6 MCP tool calls, pulling device identity, topology, metric history, error counters, and logs. In 3 seconds, it has the context that takes a human 30 to 40 minutes to gather.

- Seconds 3-5: AI reasoning. Pattern match, root cause analysis, blast radius assessment. Confidence: 92%.

- Seconds 5-8: Dry run (safety check), then execute via Ansible. Interface bounced.

- Seconds 8-72: Wait for convergence, verify metrics (UP, traffic flowing), annotate Grafana, commit incident report.

Total: 72 seconds. Zero human involvement. Complete audit trail.
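The dry-run, execute, verify sequence in the breakdown above can be sketched in a few lines of Python. This is an illustrative sketch under stated assumptions, not the actual implementation: `IncidentLog`, `remediate`, and the three callables are hypothetical names. In a real deployment the callables would presumably wrap an Ansible check-mode run, the playbook run itself, and a metrics query.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class IncidentLog:
    """Hypothetical audit trail: an ordered list of step names."""
    steps: List[str] = field(default_factory=list)

    def record(self, step: str) -> None:
        self.steps.append(step)


def remediate(
    dry_run: Callable[[], bool],
    execute: Callable[[], bool],
    verify: Callable[[], bool],
    log: IncidentLog,
) -> bool:
    """Run the change only if the dry run passes, then verify the result.

    Every step is recorded before it runs, so the log shows how far
    the attempt got even when it stops early.
    """
    log.record("dry_run")
    if not dry_run():
        log.record("aborted: dry run failed")
        return False

    log.record("execute")
    if not execute():
        log.record("escalated: execution failed")
        return False

    log.record("verify")
    if not verify():
        log.record("escalated: verification failed")
        return False

    log.record("resolved")
    return True
```

The point of the ordering is that a failed dry run stops the attempt before anything touches the device, and the log still records why.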

Beyond Reactive: Predicting Failures Before They Happen

Self-healing is impressive. But prediction is transformative.

Using the PromQL predict_linear() function in Prometheus, Claude continuously monitors trends. When it sees CPU climbing steadily toward 90%, it doesn’t wait for the threshold breach. It calculates the trajectory, identifies a runaway process, and terminates it, all before any human would have noticed.
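To make the idea concrete, here is a minimal Python sketch of the kind of calculation predict_linear() performs: a least-squares line fitted to recent samples and extrapolated forward. It is a simplification for illustration, not Prometheus code. The function names, the sample data, and the choice to extrapolate from the last sample's timestamp are all assumptions.

```python
from typing import Sequence, Tuple

Sample = Tuple[float, float]  # (timestamp in seconds, metric value)


def predict_linear(samples: Sequence[Sample], seconds_ahead: float) -> float:
    """Fit a least-squares line to the samples and extrapolate it.

    Simplification: the prediction is made relative to the last
    sample's timestamp.
    """
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")

    mean_t = sum(t for t, _ in samples) / n
    mean_v = sum(v for _, v in samples) / n
    cov = sum((t - mean_t) * (v - mean_v) for t, v in samples)
    var = sum((t - mean_t) ** 2 for t, _ in samples)
    if var == 0:
        raise ValueError("timestamps must not all be equal")

    slope = cov / var
    intercept = mean_v - slope * mean_t
    last_t = samples[-1][0]
    return intercept + slope * (last_t + seconds_ahead)


def will_breach(samples: Sequence[Sample], threshold: float,
                seconds_ahead: float) -> bool:
    """True if the fitted trend reaches the threshold within the horizon."""
    return predict_linear(samples, seconds_ahead) >= threshold


# Hypothetical CPU readings: 70% rising by 2 points every 5 minutes.
cpu = [(0.0, 70.0), (300.0, 72.0), (600.0, 74.0), (900.0, 76.0)]
print(will_breach(cpu, threshold=90.0, seconds_ahead=45 * 60))
```

With these made-up readings the fitted line crosses 90% inside the 45-minute horizon, which is the kind of early signal the paragraph above describes.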

The shift from “we fixed it in 72 seconds” to “we prevented it 45 minutes before it would have happened” is the real transformation.

The Trust Architecture: AI with Guardrails

Every decision comes with a confidence score.

- 85% and above: auto-remediate.

- 70-84%: suggest a fix, wait for approval.

- Below 70%: full escalation.

You control the thresholds, and every level leaves a complete audit trail.
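The three tiers above map naturally onto a small routing function. The sketch below is illustrative only: `Action`, `Thresholds`, and `route` are hypothetical names, and treating a score of exactly 85% as auto-remediate is an assumption about where the boundary falls.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    AUTO_REMEDIATE = "auto_remediate"
    SUGGEST_FIX = "suggest_fix"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class Thresholds:
    """Configurable cut-offs, expressed as fractions of 1.0."""
    auto: float = 0.85      # at or above: act without approval (assumed boundary)
    suggest: float = 0.70   # at or above: propose a fix and wait for approval


def route(confidence: float, thresholds: Thresholds = Thresholds()) -> Action:
    """Map a confidence score in [0, 1] to one of the three tiers."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= thresholds.auto:
        return Action.AUTO_REMEDIATE
    if confidence >= thresholds.suggest:
        return Action.SUGGEST_FIX
    return Action.ESCALATE
```

Under these defaults, the 92% confidence from the spine-switch example routes to auto-remediation. A more cautious team could pass something like `Thresholds(auto=0.95, suggest=0.80)` to shift more incidents toward human approval.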

This isn’t a black box. It’s a transparent reasoning engine with configurable autonomy.

The Numbers That Matter

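If your organization handles 100 network incidents per month at 45 minutes each, that’s 75 hours of engineering time every single month. If the 80% of incidents that follow predictable patterns are handled autonomously, roughly 60 of those hours come back to your team.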
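The arithmetic is easy to rerun with your own numbers. The helper names below are hypothetical, and the 80% automated share is taken from the earlier estimate of incidents that follow predictable patterns. It is an assumption about coverage, not a measured result.

```python
def hours_per_month(incidents: int, minutes_each: float) -> float:
    """Total engineer time spent on incidents, in hours."""
    return incidents * minutes_each / 60


def hours_recovered(incidents: int, minutes_each: float,
                    automated_share: float) -> float:
    """Hours freed if a given share of incidents needs no engineer."""
    if not 0.0 <= automated_share <= 1.0:
        raise ValueError("automated_share must be between 0 and 1")
    return hours_per_month(incidents, minutes_each) * automated_share


total = hours_per_month(100, 45)        # 100 incidents x 45 min = 75 hours
freed = hours_recovered(100, 45, 0.80)  # about 60 hours at 80% coverage
print(f"total: {total:.0f} h, recovered: {freed:.0f} h")
```

This ignores the time engineers spend reviewing audit trails and approving suggested fixes, so treat the result as an upper bound.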

What This Means for Network Teams

This isn’t about replacing network engineers. It’s about liberating them. When your senior engineers aren’t spending 70% of their time on repetitive incident response, they can focus on what they actually want to do: designing better architectures, planning capacity, and driving the strategic initiatives that move the business forward.

The NOC doesn’t disappear. It evolves. From a reactive war room to a proactive operations center where humans handle the complex, novel problems that genuinely require human judgment — and AI handles the predictable rest.

Next Steps

Want to see MCP-powered self-healing networks in action?

We can stand up a proof-of-concept deployment in two weeks.

At Telence Solutions

We continue to help professionals build scalable, intelligent networks through real-world, hands-on learning — from OSPF and IS-IS fundamentals to BGP, SD-WAN, and AI-driven automation.